Since the Panda update and its refreshes, content consolidation projects have been widely undertaken, both to recover from the effects of Panda and as a preventative measure. This article covers three risky but popular content strategies, how to diagnose them, and tips for approaching consolidation.

Pages Targeting Keywords with The Same User Intent

Imagine a site architecture that looks roughly like the following:

- super-duper-bargain-floss.biz/products/dental-floss

- super-duper-bargain-floss.biz/products/best-dental-floss

- super-duper-bargain-floss.biz/products/cheap-dental-floss

- super-duper-bargain-floss.biz/products/discount-dental-floss

- super-duper-bargain-floss.biz/products/low-price-dental-floss

- super-duper-bargain-floss.biz/products/bulk-dental-floss

- super-duper-bargain-floss.biz/products/wholesale-dental-floss

- super-duper-bargain-floss.biz/products/spearmint-dental-floss

- super-duper-bargain-floss.biz/products/peppermint-dental-floss

- super-duper-bargain-floss.biz/products/mint-dental-floss

When I see this structure, it tells me the site is pursuing a very aggressive strategy, because there is no difference in user intent between

super-duper-bargain-floss.biz/products/cheap-dental-floss

super-duper-bargain-floss.biz/products/discount-dental-floss

super-duper-bargain-floss.biz/products/low-price-dental-floss

or

super-duper-bargain-floss.biz/products/bulk-dental-floss

super-duper-bargain-floss.biz/products/wholesale-dental-floss

or

super-duper-bargain-floss.biz/products/dental-floss

super-duper-bargain-floss.biz/products/best-dental-floss

A visitor who lands on /cheap-dental-floss or /discount-dental-floss is looking for exactly the same thing. In fact, in most spaces one of these phrases will have far more search volume than the other. At some level this is even keyword cannibalization: the pages overlap so much that they compete with each other for rankings. Remember, Google has become very good at understanding synonymous phrases, and I predict that in the future, aggressive strategies like this will hurt more than they help.

How to diagnose

- Run a crawler on your site (you can try the ninja crawler to be able to view this really easily)

- Check to see if there is any synonymous overlap between URLs
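Once you have a list of crawled URLs, the overlap check can be roughly automated. The sketch below is only an illustration: the `SYNONYMS` table, the `head_term` default, and the function names are assumptions made for the dental floss example, and a real site would need its own synonym list.

```python
from collections import defaultdict

# Hypothetical synonym table: each URL modifier maps to one intent label.
SYNONYMS = {
    "cheap": "cheap", "discount": "cheap", "low-price": "cheap",
    "bulk": "bulk", "wholesale": "bulk",
    "best": "head",  # assumed to add no distinct intent to the head term
}

def intent_key(url, head_term="dental-floss"):
    """Reduce a URL to the intent label of its last path segment."""
    slug = url.rstrip("/").rsplit("/", 1)[-1]
    if slug.endswith(head_term):
        modifier = slug[: -len(head_term)].strip("-")
    else:
        modifier = slug
    # An empty modifier means the page targets the head term itself.
    return SYNONYMS.get(modifier, modifier) or "head"

def find_overlaps(urls):
    """Group URLs by intent label and keep only groups with more than one URL."""
    groups = defaultdict(list)
    for url in urls:
        groups[intent_key(url)].append(url)
    return {key: found for key, found in groups.items() if len(found) > 1}

BASE = "super-duper-bargain-floss.biz/products/"
URLS = [BASE + slug for slug in (
    "dental-floss", "best-dental-floss", "cheap-dental-floss",
    "discount-dental-floss", "low-price-dental-floss", "bulk-dental-floss",
    "wholesale-dental-floss", "spearmint-dental-floss",
    "peppermint-dental-floss", "mint-dental-floss",
)]
OVERLAPS = find_overlaps(URLS)

for key, found in OVERLAPS.items():
    print(key, found)
```

Each group that comes back is a candidate for consolidation. The three mint pages do not show up because the table deliberately does not treat them as synonyms.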

How to fix it

- Consolidate content by grouping content by user intent.

- In my example case above it would be:

Keep: super-duper-bargain-floss.biz/products/dental-floss

301 redirect to it:

super-duper-bargain-floss.biz/products/best-dental-floss

Keep: super-duper-bargain-floss.biz/products/discount-dental-floss

301 redirect to it:

super-duper-bargain-floss.biz/products/cheap-dental-floss

super-duper-bargain-floss.biz/products/low-price-dental-floss

Keep: super-duper-bargain-floss.biz/products/bulk-dental-floss

301 redirect to it:

super-duper-bargain-floss.biz/products/wholesale-dental-floss

Keep as separate pages:

super-duper-bargain-floss.biz/products/spearmint-dental-floss

super-duper-bargain-floss.biz/products/peppermint-dental-floss

super-duper-bargain-floss.biz/products/mint-dental-floss

Sidebar: you may notice that I left /spearmint-dental-floss, /peppermint-dental-floss, and /mint-dental-floss in place. The reasoning is simply that potential visitors may be looking for something different. Someone looking for mint dental floss may want spearmint, peppermint, or wintergreen. Conversely, at the more precise level, you can think about how to tailor the on-page experience on /mint-dental-floss for someone looking for peppermint dental floss.
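The consolidation plan above can be written down as data before anything is changed on the server. This is a minimal sketch, assuming the first URL in each group is the page that is kept and the URLs listed under "301 redirect" point to it. `PLAN` and `build_redirects` are illustrative names, not part of any tool.

```python
# Kept page -> pages that should 301 redirect to it (paths only).
PLAN = {
    "/products/dental-floss": ["/products/best-dental-floss"],
    "/products/discount-dental-floss": [
        "/products/cheap-dental-floss",
        "/products/low-price-dental-floss",
    ],
    "/products/bulk-dental-floss": ["/products/wholesale-dental-floss"],
}

def build_redirects(plan):
    """Flatten the plan into {old_path: new_path} and sanity-check it."""
    redirects = {}
    for target, sources in plan.items():
        for source in sources:
            if source in redirects:
                raise ValueError(f"{source} is redirected twice")
            redirects[source] = target
    # A kept page that is itself redirected would create a redirect chain.
    chained = set(redirects) & set(plan)
    if chained:
        raise ValueError(f"redirect chain through: {sorted(chained)}")
    return redirects

REDIRECTS = build_redirects(PLAN)

for old, new in sorted(REDIRECTS.items()):
    print(f"301 {old} -> {new}")
```

Keeping the plan in one place makes it easier to catch a page that is both kept and redirected, and the same mapping can later be used to check a crawl against.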

URL Parameters and Session IDs

These content issues are old ones, but I still see them all the time. When I look at an eCommerce site, it is usually not a matter of whether it has URL-parameter-based duplicate content, but where.

How to diagnose

A very simple check exists for this: take a snippet of text, usually about 7 words, from a page at every level of your site architecture, search for it in quotes, and see what is returned. A good percentage of webmasters who run this check are surprised by weird URL parameters generating duplicate content.

Another great tool for this is a crawler, such as the ninja crawler mentioned earlier. A crawler is great for identifying weird CMS errors that may be generating URLs, and internal site search pages that may be getting indexed.
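If you export the URLs a crawler finds, a short script can group the ones that differ only by parameters. The sketch below uses Python's standard `urllib.parse`. The parameter names in `IGNORED_PARAMS` and the sample URLs are assumptions for illustration, so swap in whatever your own site actually emits.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters assumed not to change what the page is about.
IGNORED_PARAMS = {"sessionid", "sid", "ref", "sort"}

def normalize(url):
    """Strip ignored parameters and the fragment, and sort what remains."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    )
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), "")
    )

def duplicate_groups(urls):
    """Return {normalized_url: [crawled urls]} for groups larger than one."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: found for key, found in groups.items() if len(found) > 1}

CRAWLED = [
    "http://super-duper-bargain-floss.biz/products/dental-floss",
    "http://super-duper-bargain-floss.biz/products/dental-floss?sessionid=abc123",
    "http://super-duper-bargain-floss.biz/products/dental-floss?sort=price&ref=somesite",
    "http://super-duper-bargain-floss.biz/products/mint-dental-floss?page=2",
]
DUPLICATES = duplicate_groups(CRAWLED)

for canonical, found in DUPLICATES.items():
    print(canonical, len(found))
```

Any group with more than one member is a set of URLs that likely serve the same content and need one of the fixes below.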

How To Fix It

Fixing these types of issues varies site by site, but it usually involves some combination of robots.txt, rel=next/rel=prev, rel=canonical, and noindex.
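As one possible combination, a template helper could decide which tag a page gets in its head. This is a sketch under assumptions, not a recommendation for every site: the `/search` path for internal site search and the rule "point every parameterized URL at its parameter-free version" are both hypothetical choices made for the example.

```python
from html import escape
from urllib.parse import urlsplit, urlunsplit

SEARCH_PREFIX = "/search"  # hypothetical path of the internal site search

def head_tag(url):
    """Return a noindex meta tag for search pages, else a canonical link."""
    parts = urlsplit(url)
    if parts.path.startswith(SEARCH_PREFIX):
        # Keep internal search results out of the index but let links be followed.
        return '<meta name="robots" content="noindex, follow">'
    # Assumed rule: the canonical version is the URL without query or fragment.
    clean = urlunsplit((parts.scheme, parts.netloc, parts.path, "", ""))
    return f'<link rel="canonical" href="{escape(clean, quote=True)}">'

print(head_tag("http://super-duper-bargain-floss.biz/search?q=floss"))
print(head_tag("http://super-duper-bargain-floss.biz/products/dental-floss?sessionid=abc"))
```

Paginated and sorted listings usually need more care than this blanket rule, which is part of why the fix varies site by site.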

The Mash-Up

There are only so many ways you can slice and dice content on a web site. Recycling content wholesale, or with find and replace, can go too far really fast. The difficulty with this type of duplicate content is that whole content strategies are often built upon it, so fixing it usually involves aggressive changes to site architecture. When undertaking changes like this, carefully re-plan your internal linking strategy after you clean up pages, and run a crawl to make sure that redirects and removals were properly implemented.

How To Diagnose it

These are usually very large sites with very little unique content on each page. Although the content tends not to be outright duplicate, it gets thinner the deeper you go into the site. Many of these sites also tend to have low domain authority and low engagement.
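One rough way to spot find-and-replace recycling is to measure how much text two pages share. The sketch below compares pages by Jaccard similarity over three-word shingles. It is a generic near-duplicate technique rather than anything Google has published, and the sample page copy and the shingle size are made up for illustration.

```python
import re

def shingles(text, size=3):
    """Return the set of overlapping word n-grams in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}

def similarity(text_a, text_b):
    """Jaccard similarity of the two shingle sets, from 0.0 to 1.0."""
    set_a, set_b = shingles(text_a), shingles(text_b)
    if not set_a or not set_b:
        return 0.0
    return len(set_a & set_b) / len(set_a | set_b)

# Hypothetical page copy where only the city name was swapped.
PAGE_A = "Buy cheap dental floss in Springfield from our friendly local team today"
PAGE_B = "Buy cheap dental floss in Shelbyville from our friendly local team today"
PAGE_C = "A short history of waxed thread and how people cleaned teeth long ago"

print(round(similarity(PAGE_A, PAGE_B), 2))
print(round(similarity(PAGE_A, PAGE_C), 2))
```

Pairs that score high despite targeting different keywords are worth reviewing by hand. Real pages are much longer than these samples, so a single swapped word moves the score far less there.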

How To Fix It

Many of these types of large sites were hit by Panda. The particular actions that need to be taken vary by site. A fix usually involves fairly extensive content creation combined with a content consolidation strategy: identify the low-performing and low-quality sections of the site, then redirect or remove them, depending on your philosophy and the situation. These types of radical changes also involve heavy revisions to your internal linking strategy and to the site architecture as a whole.

Some questions to consider:

Auditing a content strategy and deciding whether content consolidation needs to be undertaken is like peeling an onion.

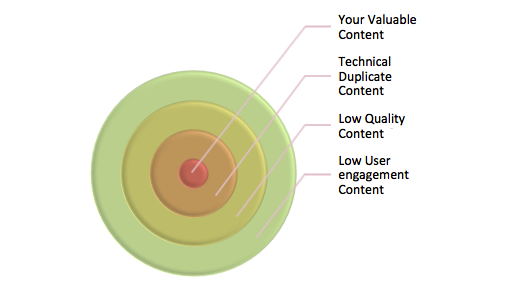

Technical Duplicate Content:

- What do the URLs on your site generally look like?

- How is the site handling sorting and pagination?

- How is internal site search handled?

Low Quality Content:

- Where is your best content? Is it all balled up on a couple key landing pages or does it extend to deeper pages as well? Does the content have a keyword density that is too high for a particular keyword and is this a persistent pattern across pages?

- Were you using automation as part of your content strategy in the past?

- Look at the pages in Google Analytics that get the fewest organic search visitors. Is this content worth keeping?

User Engagement:

- Look at the pages with the highest bounce rate.

- Look at your pages by section in analytics. What are the worst performing sections on your site?
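The engagement questions above can be answered from a page-level analytics export. The sketch below rolls pages up into top-level sections and ranks them by bounce rate. The rows in `ROWS` are invented sample figures, and the column layout is an assumption rather than the format of any particular analytics product.

```python
from collections import defaultdict

# Hypothetical export rows: (page path, organic visits, bounces).
ROWS = [
    ("/products/dental-floss", 1200, 480),
    ("/products/mint-dental-floss", 300, 150),
    ("/blog/flossing-tips", 90, 81),
    ("/blog/floss-history", 10, 9),
]

def section_of(path):
    """Treat the first path segment as the section, e.g. /products."""
    return "/" + path.strip("/").split("/")[0]

def bounce_rate_by_section(rows):
    """Return [(section, bounce_rate)] sorted from worst to best."""
    visits = defaultdict(int)
    bounces = defaultdict(int)
    for path, page_visits, page_bounces in rows:
        section = section_of(path)
        visits[section] += page_visits
        bounces[section] += page_bounces
    rates = {s: bounces[s] / visits[s] for s in visits if visits[s]}
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)

RANKED = bounce_rate_by_section(ROWS)

for section, rate in RANKED:
    print(f"{section}: {rate:.0%}")
```

Sections at the top of the list, especially those with few organic visits as well, are the first candidates to review for consolidation.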

Other Great Resources:

Tips for Consolidating Duplicate Content, Andrew Kaufman

This article covers some good tips for consolidating duplicate content.

Handling Duplicate Content, Ivan Strouchliak, SEOChat

This article covers a lot of interesting concepts, including block level analysis.

31 Responses

There’s a whole slew of Technical URL issues.

Even if you cannot actually “see” them – many exist, simply waiting for someone to link to you and cause the problems.

Things like;

* Double slashes [//] in the filepath, or switching from one space character to another [%20 to + or vice versa].

* Subdomains – the www/non-www/some random subdomain (some servers/sites seem to have wildcard DNS working for some reason).

* Variant DomainNames – people that point different domains to the same site, and don’t setup the redirects.

* The tack-ons … some of the wonderful directory/listing sites out there append their own tracking params to URLs … and you can end up with things like [ref=somesite] on the end of your URL.

And those are just the common issues!

Personally, you should avoid using robots.txt as much as possible in most cases. You will be better served with noindex (at least PR can flow).

Further – you are better off finding the root cause for the issue, and ensuring it’s handled (you’d be amazed how many CMS/Platforms have poorly implemented pagination, tagging, categorisation etc.).

On a different note – there is sometimes a spanner in the works of content consolidation … campaign-based landing pages.

Sometimes you have “highly-similar” (cookie cutter) content pages that aren’t there to game the SEs, that aren’t there for ranking – they are there for conversions.

Whether this is from internal click paths, advertising campaigns or affiliate setups … many will have various pages with variations of the content.

In such cases – you should have a “default” … one page that is preferred. You can then simply use the Canonical Link Element or NoIndex on the variants.

I think robots.txt still has a place in the universe, for example for cleaning up search results pages getting indexed, etc. But I agree with you on the whole, that one ought to avoid using robots.txt, in many cases.
